
Azure Functions, like AWS Lambda or Google Cloud Functions, are event-driven serverless functions that run on demand. A function can be triggered by an HTTP request, which makes it usable as a web API endpoint. An incoming IoT stream, a database change, or a fixed schedule can also serve as a trigger.

What are Azure Functions?

Microsoft, as the provider of Azure Functions, allocates the underlying cloud resources dynamically and scales them in proportion to demand. Because of this demand-driven provisioning, users on the Consumption plan (the hosting plan) pay only for the number of executions, the execution time, and the memory consumed. The practical example at the end of the article shows the costs that can arise.
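To make the three billing dimensions concrete, the following is a minimal sketch of how a monthly bill could be estimated. The function name `monthly_cost`, the default prices, and the free allowances are illustrative assumptions rather than figures from this article, and the sketch ignores the platform's rounding rules for memory and execution time. Check the current Azure price list before relying on any number.

```python
def monthly_cost(
    executions: int,
    avg_seconds: float,
    memory_gb: float,
    price_per_million_exec: float = 0.20,    # assumed price, illustrative only
    price_per_gb_second: float = 0.000016,   # assumed price, illustrative only
    free_executions: int = 1_000_000,        # assumed monthly free allowance
    free_gb_seconds: float = 400_000.0,      # assumed monthly free allowance
) -> float:
    """Estimate a monthly bill from executions, execution time and memory."""
    # Resource consumption is measured as memory multiplied by run time.
    gb_seconds = executions * avg_seconds * memory_gb

    # Only the usage above the free allowances is billed.
    billable_exec = max(0, executions - free_executions)
    billable_gb_seconds = max(0.0, gb_seconds - free_gb_seconds)

    return (
        billable_exec / 1_000_000 * price_per_million_exec
        + billable_gb_seconds * price_per_gb_second
    )


if __name__ == "__main__":
    # A scraper that runs once a day for 5 seconds with 256 MB of memory.
    print(monthly_cost(executions=30, avg_seconds=5, memory_gb=0.25))  # 0.0
```

Under these assumptions, a function that runs once a day stays far below the free allowances, so its compute cost is zero. Costs for storage or a database are not covered by this sketch.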

With serverless functions, developers no longer have to worry about the infrastructure, that is, provisioning and maintaining servers. Instead, they can concentrate fully on developing their application. However, the code should not become too complex, so that the function can always react quickly to its trigger.

COVID-19: A practical example

As a rule, Azure Functions are used together with other cloud services. However, they are also well suited to standalone use, for example in these two scenarios:

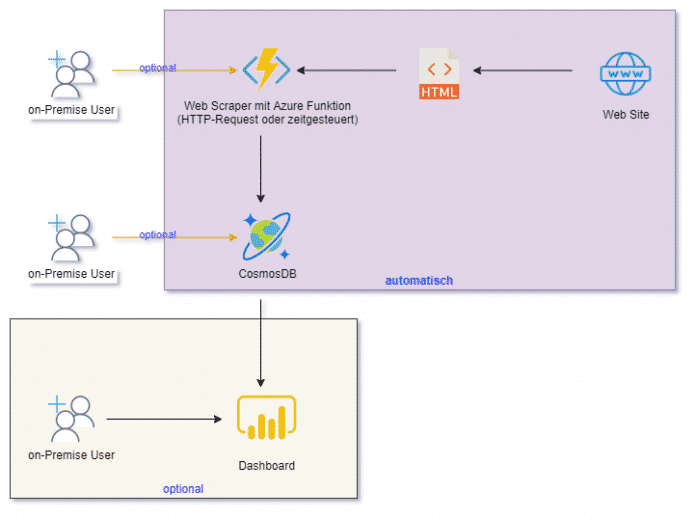

- Time-controlled web scraping and persistence of information in a cloud database such as Cosmos DB for later processing or presentation on a dashboard (see Fig. 1)

- Web API of an AI model (Azure Function triggered by HTTP request)

The Azure documentation describes in detail how to create an Azure Function with the command-line interface (CLI) and in Visual Studio Code. This article builds on that documentation and shows how an Azure Function with an HTTP trigger can be extended into a web scraper in a few simple steps. The function is then connected to Cosmos DB and deployed to the cloud as a time-controlled Azure Function, where it automatically collects information from the Internet every day.
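The core of such a scraper can be sketched independently of Azure. The example below uses only the Python standard library: it reads the rows of an HTML table and turns them into dictionaries that could be stored as database documents. The table layout (region, case count), the field names, and the helper names `CellTextParser` and `scrape_to_documents` are hypothetical. The Azure-specific wiring (trigger, Cosmos DB connection) is deliberately left out.

```python
from datetime import datetime, timezone
from html.parser import HTMLParser


class CellTextParser(HTMLParser):
    """Collect the text of every <td> cell, grouped by table row."""

    def __init__(self):
        super().__init__()
        self.rows = []     # finished rows, each a list of cell strings
        self._row = None   # cells of the row currently being read
        self._cell = None  # text fragments of the cell currently being read

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag == "td" and self._row is not None:
            self._cell = []

    def handle_data(self, data):
        if self._cell is not None:
            self._cell.append(data)

    def handle_endtag(self, tag):
        if tag == "td" and self._cell is not None:
            self._row.append("".join(self._cell).strip())
            self._cell = None
        elif tag == "tr" and self._row is not None:
            if self._row:  # header rows contain only <th> cells and stay empty
                self.rows.append(self._row)
            self._row = None
            self._cell = None


def scrape_to_documents(html, scraped_at=None):
    """Turn two-column table rows (region, cases) into document dicts."""
    parser = CellTextParser()
    parser.feed(html)
    parser.close()
    stamp = (scraped_at or datetime.now(timezone.utc)).isoformat()
    documents = []
    for row in parser.rows:
        if len(row) != 2:
            continue  # skip rows that do not match the assumed layout
        region, cases = row
        documents.append({
            "id": f"{region}-{stamp}",              # hypothetical unique key
            "region": region,
            "cases": int(cases.replace(",", "")),   # assumes "1,234" style numbers
            "scrapedAt": stamp,
        })
    return documents
```

In a deployed function, the handler would fetch the page, pass its HTML to `scrape_to_documents`, and hand the resulting list to the database. Keeping the parsing in a plain function like this also makes it easy to test locally with a saved copy of the page.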
